Azure Durable Function: Fan Out vs. Parallel.ForEachAsync

I have to run a function on a list of items. I'm using Azure Durable Functions, and can run the items in parallel using their fan out/fan in strategy.

However, I'm wondering what the difference is between doing that and using the new Parallel.ForEachAsync method within a single Activity function. I need to use Durable Functions because this is an eternal orchestration that is restarted upon completion.

Solution 1:[1]

A Parallel.ForEachAsync is bound to one Function App instance, which means it is limited to the resources that instance has. On the Consumption plan, that means 1 vCPU.
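To make the single-instance case concrete, here is a minimal sketch of Parallel.ForEachAsync in plain .NET 6. The class name, `ProcessItemAsync`, and the doubling logic are hypothetical stand-ins for whatever the real activity would do. All iterations share the CPU and memory of the one process running them.

```csharp
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

public static class LocalParallelDemo
{
    // Hypothetical per-item work; a real activity would call an API, a database, etc.
    public static async Task<int> ProcessItemAsync(int item, CancellationToken ct)
    {
        await Task.Delay(10, ct); // simulated I/O
        return item * 2;
    }

    // Runs every item inside the current process, at most maxParallel at a time.
    public static async Task<int[]> ProcessAllAsync(IEnumerable<int> items, int maxParallel)
    {
        var results = new ConcurrentBag<int>(); // thread-safe collection for the outputs
        var options = new ParallelOptions { MaxDegreeOfParallelism = maxParallel };

        await Parallel.ForEachAsync(items, options, async (item, ct) =>
        {
            results.Add(await ProcessItemAsync(item, ct));
        });

        // Completion order is not deterministic, so sort before returning.
        return results.OrderBy(x => x).ToArray();
    }
}
```

Raising `maxParallel` helps mostly with I/O-bound work. CPU-bound work is still capped by the cores of that one instance.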

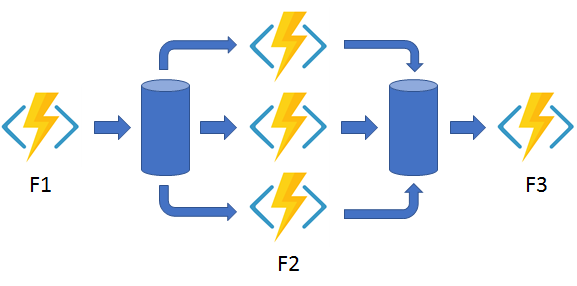

When using a Fan out / Fan in approach with Durable Functions, each invocation of F2 (see image) can run on its own Function App instance as the app scales out, and each of those instances can use the entirety of the resources allocated to it.

In short: with a Fan out / Fan in approach, you are using (a lot) more resources, potentially giving you a much faster result.

Chances are you would be best off with a combination of the two: dispatch batches of work to F2, which then processes each batch in parallel.

The fan-out work is distributed to multiple instances of the F2 function. The work is tracked by using a dynamic list of tasks. Task.WhenAll is called to wait for all the called functions to finish. Then, the F2 function outputs are aggregated from the dynamic task list and passed to the F3 function.

The automatic checkpointing that happens at the await call on Task.WhenAll ensures that a potential midway crash or reboot doesn't require restarting an already completed task.
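The combination suggested above (fan out batches to F2, then process each batch in parallel) could be sketched roughly as follows. This assumes the in-process C# model with the Microsoft.Azure.WebJobs.Extensions.DurableTask package on .NET 6. The orchestrator name, the batch size of 50, the parallelism of 4, and the simulated work are illustrative assumptions, and the final F3 aggregation step is replaced by a simple sum.

```csharp
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class BatchedFanOut
{
    [FunctionName("BatchedFanOutOrchestrator")] // hypothetical name
    public static async Task<int> RunOrchestrator(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        // Assumed input: the full list of work items.
        var items = context.GetInput<List<string>>();

        // Fan out: one F2 activity call per batch (batch size is an arbitrary choice).
        List<Task<int>> tasks = items
            .Chunk(50)
            .Select(batch => context.CallActivityAsync<int>("F2", batch))
            .ToList();

        // Fan in: wait for every batch; completed activities are checkpointed.
        int[] processedCounts = await Task.WhenAll(tasks);
        return processedCounts.Sum();
    }

    [FunctionName("F2")]
    public static async Task<int> ProcessBatch([ActivityTrigger] string[] batch)
    {
        var processed = new ConcurrentBag<string>();
        var options = new ParallelOptions { MaxDegreeOfParallelism = 4 };

        // Inside one activity, items run in parallel on a single instance.
        await Parallel.ForEachAsync(batch, options, async (item, ct) =>
        {
            await Task.Delay(10, ct); // stand-in for the real per-item work
            processed.Add(item);
        });

        return processed.Count;
    }
}
```

Batching keeps the number of activity calls, and with it the orchestration history, smaller than one call per item would, while still spreading the work across instances.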

Solution 2:[2]

To me the main difference is that Durable Functions will take care of failures/retries for you:

The automatic checkpointing that happens at the await call on Task.WhenAll ensures that a potential midway crash or reboot doesn't require restarting an already completed task.

In rare circumstances, it's possible that a crash could happen in the window after an activity function completes but before its completion is saved into the orchestration history. If this happens, the activity function would re-run from the beginning after the process recovers.
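As an illustration of the built-in retry support, the sketch below calls an activity with a retry policy. It assumes the in-process C# model (Microsoft.Azure.WebJobs.Extensions.DurableTask). The orchestrator name, the activity name F2, and the retry values are placeholders, not recommendations.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class RetryingOrchestrator
{
    [FunctionName("RetryingOrchestrator")] // hypothetical name
    public static async Task<int> Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        // Up to 3 attempts in total, waiting 5 seconds before the first retry
        // and doubling the wait for each retry after that.
        var retryOptions = new RetryOptions(
            firstRetryInterval: TimeSpan.FromSeconds(5),
            maxNumberOfAttempts: 3)
        {
            BackoffCoefficient = 2.0
        };

        // Assumed input: one batch of work items.
        string[] batch = context.GetInput<string[]>();

        // If F2 throws, the framework re-schedules it according to retryOptions.
        return await context.CallActivityWithRetryAsync<int>("F2", retryOptions, batch);
    }
}
```

Because an activity can occasionally run more than once, as described above, it is worth making the activity's side effects idempotent.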

Solution 3:[3]

One more difference for the mix, and for completeness: management of parallel activities.

With durable fan out/fan in you have a lot of built-in management control.

Firstly, you can control the number of parallel processes easily with configuration in your host.json file, and secondly you can manage/monitor the progress of parallel activities with the management API.

The first can be helpful when you are accessing a resource that has limitations, such as a throttled API, so that it is not inundated with more parallel requests than it can handle efficiently. The following host.json example limits each host instance to 9 concurrent activity functions:

    {
      "version": "2.0",
      "functionTimeout": "00:10:00",
      "extensions": {
        "durableTask": {
          "hubName": "<your-hub-name-here>",
          "maxConcurrentActivityFunctions": 9
        }
      },
      "logging" : {
        ...
      }
    }

This names your task hub and caps the number of activity functions that run concurrently on each host instance.

Then, for instance management, you can query and terminate orchestration instances, send them events, and purge their instance history. This kind of functionality can be really useful if you need to know when activities have completed, especially if you need to know the success/fail status of each completed activity. This helps to reliably start downstream processes that rely on all activities being completed.

Retries were mentioned by @Thiago; when using the built-in retry mechanism, the management API really comes into its own for monitoring running activities. It can be really useful in building solid resilience and handling downstream processes.
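A rough sketch of those management operations from C# follows, again assuming the in-process model, where an `IDurableOrchestrationClient` is obtained through the `[DurableClient]` binding. The helper name, the termination reason, and the decision logic are hypothetical.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class InstanceManagement
{
    // Hypothetical helper: terminates an instance that is still running,
    // or purges its history once it has completed. Returns true if it terminated.
    public static async Task<bool> StopOrCleanUpAsync(
        IDurableOrchestrationClient client, string instanceId)
    {
        // Query the current state of the orchestration instance.
        DurableOrchestrationStatus status = await client.GetStatusAsync(instanceId);
        if (status == null)
        {
            return false; // no instance with this ID
        }

        if (status.RuntimeStatus == OrchestrationRuntimeStatus.Running)
        {
            await client.TerminateAsync(instanceId, "Stopped by operator");
            return true;
        }

        if (status.RuntimeStatus == OrchestrationRuntimeStatus.Completed)
        {
            // Remove the stored history once the result is no longer needed.
            await client.PurgeInstanceHistoryAsync(instanceId);
        }

        return false;
    }
}
```

The same operations are also exposed over HTTP by the Durable Functions extension, which is what the management API mentioned above refers to.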

Sources

This article follows the attribution requirements of Stack Overflow and is licensed under CC BY-SA 3.0.

Source: Stack Overflow

| Solution | Source |
|---|---|
| Solution 1 | rickvdbosch |
| Solution 2 | Thiago Custodio |
| Solution 3 | robs |